Artificial intelligence can write emails, spot patterns in medical scans, and answer questions in seconds. But behind that speed is a growing energy bill. Every new model, every extra server, and every AI feature added to daily life puts more strain on the hardware that keeps it all running.

Now, researchers at the University of Cambridge say they may have found a way to make AI hardware work much more like the human brain, and in doing so, use far less electricity. Their newly developed device is still at an early stage, but it points to a future where smarter machines do not necessarily need ever larger amounts of power.

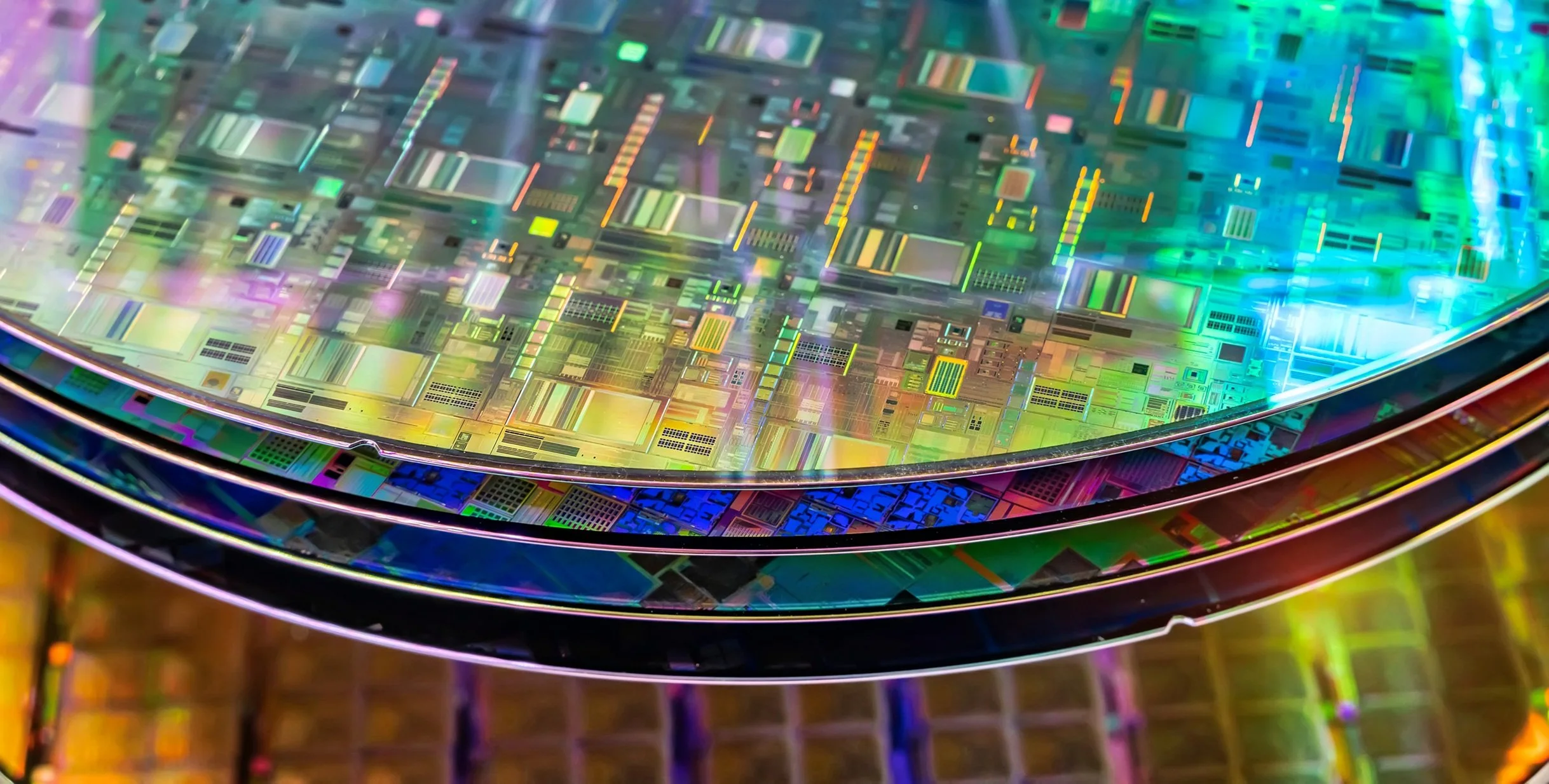

Image Credit: asharkyu via Shutterstock / HDR tune by Universal-Sci

Why does AI need so much electricity in the first place?

Much of today’s AI runs on conventional computer hardware that separates memory from processing. In simple terms, that means information is constantly being moved back and forth between where it is stored and where it is handled. That repeated shuttling uses a lot of energy, especially when systems are processing huge amounts of data.

The Cambridge team is working on an alternative known as neuromorphic computing. The idea is to build hardware that behaves more like the brain, where information can be stored and processed in the same place. That can make the system much more efficient and potentially more flexible as well.

According to the researchers, this type of design could reduce energy use by as much as 70%. That is one reason scientists and chip designers are paying close attention to components called memristors, which are designed to imitate how connections between neurons strengthen or weaken over time.

Lead author Dr Babak Bakhit from Cambridge’s Department of Materials Science and Metallurgy put the challenge plainly: "Energy consumption is one of the key challenges in current AI hardware." He added that solving it requires devices with "extremely low currents, excellent stability, outstanding uniformity across switching cycles and devices, and the ability to switch between many distinct states."

In other words, the goal is not just to make AI chips smaller. It is to make them behave in a steadier, more brain-like way while using much less power.

What did the researchers change, and why does it matter?

At the heart of the new work is a material called hafnium oxide. This material is already familiar in electronics, but the Cambridge researchers altered it in a way that gave it very different behavior.

Most existing memristors work by forming tiny conductive filaments inside a metal oxide material. Those filaments can help the device switch between states, but they are often unpredictable. They also tend to need relatively high voltages, which makes them less appealing for large-scale, low-energy computing.

The Cambridge team took a different route. By adding strontium and titanium to a hafnium-based thin film and growing it in two stages, they created tiny internal electronic gates known as p-n junctions where the layers meet. Rather than relying on filaments that grow and break apart, the device changes resistance by adjusting the energy barrier at that interface.

That may sound technical, but the practical point is straightforward: the switching becomes smoother and more reliable.

Bakhit explained the advantage directly: "Filamentary devices suffer from random behaviour," he said. "But because our devices switch at the interface, they show outstanding uniformity from cycle to cycle and from device to device."

That consistency matters. For AI hardware to be useful outside the lab, it cannot behave one way in one device and another way in the next. It needs to respond predictably over and over again.

The team reports that their hafnium-based memristors achieved switching currents about a million times lower than some conventional oxide-based devices. They also demonstrated hundreds of distinct, stable conductance levels, which is important for analogue in-memory computing. Instead of simply storing a 0 or a 1, devices like this may be able to represent a much wider range of values, closer to the way biological systems process information.
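To make the difference between a two-state cell and a many-state cell concrete, here is a minimal Python sketch. It is an illustration only, not the researchers' method: the function names, the normalized 0-to-1 conductance range, the example weights, and the figure of 256 levels are all assumptions chosen for the example (the team reports "hundreds" of levels without this exact number).

```python
def quantize(value, levels, lo=0.0, hi=1.0):
    """Snap a value to the nearest of `levels` evenly spaced states in [lo, hi].

    Hypothetical helper: models a memory cell that can hold only a fixed
    number of distinct conductance states.
    """
    if levels < 2:
        raise ValueError("need at least two levels")
    clipped = min(max(value, lo), hi)
    step = (hi - lo) / (levels - 1)
    return lo + round((clipped - lo) / step) * step


def max_quantization_error(levels, lo=0.0, hi=1.0):
    """Worst-case rounding error: half the spacing between adjacent states."""
    return (hi - lo) / (levels - 1) / 2


# Illustrative weights a neural network might want to store (made up).
weights = [0.12, 0.47, 0.80, 0.33]

binary = [quantize(w, 2) for w in weights]      # each cell holds only 0 or 1
analogue = [quantize(w, 256) for w in weights]  # 256 states is an assumed count
```

With two states, every stored value can be off by up to half the full range. With a few hundred states, the worst-case error shrinks to a fraction of a percent of that range, which is why many stable levels matter for analogue in-memory computing.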

Could this actually lead to brain-inspired AI chips?

The early signs are promising, but the technology is not ready for mass production yet.

In laboratory tests, the devices withstood tens of thousands of switching cycles and held their programmed states for around a day. They also reproduced a key biological learning rule called spike-timing-dependent plasticity. That is the process by which neurons strengthen or weaken their connections depending on the timing of incoming signals.
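For readers who want to see what a timing-based learning rule looks like, the sketch below uses a common textbook form of spike-timing-dependent plasticity with exponential decay. It is not taken from the Cambridge paper: the function name and the amplitude and time-constant values are assumptions picked purely for illustration.

```python
import math


def stdp_weight_change(dt_ms, a_plus=0.01, a_minus=0.012, tau_ms=20.0):
    """Return the change in connection strength for one pair of spikes.

    dt_ms is (time of receiving neuron's spike) - (time of sending neuron's
    spike), in milliseconds. All parameter values are illustrative
    assumptions, not measurements from the device.
    """
    if dt_ms > 0:
        # Sender fired first: the connection is strengthened,
        # more so when the two spikes are close together in time.
        return a_plus * math.exp(-dt_ms / tau_ms)
    if dt_ms < 0:
        # Receiver fired first: the connection is weakened.
        return -a_minus * math.exp(dt_ms / tau_ms)
    return 0.0  # simultaneous spikes: no change in this simple model


# Example: sender fires 5 ms before the receiver, then 5 ms after it.
strengthen = stdp_weight_change(5.0)
weaken = stdp_weight_change(-5.0)
```

In this model, a signal that arrives just before the receiving neuron fires is treated as having helped cause that firing, so its connection grows. A signal that arrives just after is treated as irrelevant, so its connection shrinks.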

That behavior is important because future AI hardware may need to do more than store information. It may need to learn and adapt in real time, in a way that is more efficient than today’s conventional systems. As Bakhit put it: "These are the properties you need if you want hardware that can learn and adapt, rather than just store bits."

Still, there is a major obstacle. The current fabrication method requires temperatures of about 700°C, which is higher than what standard semiconductor manufacturing usually tolerates. Bakhit described that as the main challenge facing the process. The team is now trying to lower that temperature so the technology can fit more easily into existing chip-making methods.

Even with that hurdle, the researchers believe the concept has real potential. If they can make the process compatible with standard industry workflows, the devices could eventually be integrated into chip-scale systems.

There is also a human side to the story. Bakhit said the project took years of unsuccessful attempts before it began to work. The breakthrough came late last year after he adjusted the two-stage deposition method and added oxygen only after the first layer had been grown. That change appears to have helped create the internal structure needed for the device to operate in this new way.

The research was published in Science Advances and supported in part by the Swedish Research Council, the Royal Academy of Engineering, the Royal Society, and UK Research and Innovation. A patent application has also been filed by Cambridge Enterprise, the university’s innovation arm.

For now, this is still a research result, not a finished commercial product. But it highlights a larger shift in how scientists are thinking about AI. As models become more capable, the question is no longer only what AI can do. It is also how much energy we are willing to spend to make it happen.

The Cambridge team’s answer is not to push current hardware harder. It is to rethink the hardware itself. And if that approach succeeds, the future of AI may depend less on brute force and more on learning from the system that still sets the standard for efficient intelligence: the human brain.

If you are interested in more details about the research, be sure to check out the paper in the peer-reviewed journal Science Advances, listed below.

Sources and further reading:

HfO2-based memristive synapses with asymmetrically extended p-n heterointerfaces for highly energy-efficient neuromorphic hardware - (Science Advances)

How AI can help us in the search for extraterrestrial life - (Universal-Sci)

Ethics of AI: how should we treat rational, sentient robots – if they existed? - (Universal-Sci)

Will AI rescue us from a world without working antibiotics? - (Universal-Sci)
